Last May, I flew from Mauritius to Pittsburgh to attend PyCon US 2025. I had two layovers, in France and Detroit. That was a total of 24 hours of travel.

But I managed to reach Pittsburgh safely and in one piece 😀

Getting to PyCon was easy by public transportation. I was delighted to see the banners on the streets.

I volunteered at the registration desk. I made some good friends there. I hope to volunteer even more this year.

The talks were really interesting. They cater to everyone from total beginners to professionals.

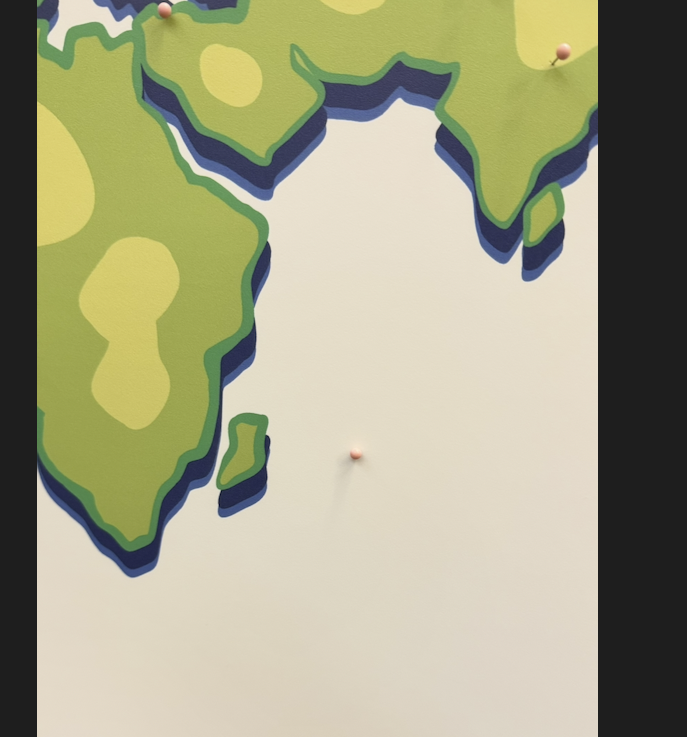

I added a pin marking the only Mauritian at the conference. At PyCon 2026, there will be at least four of us 😀

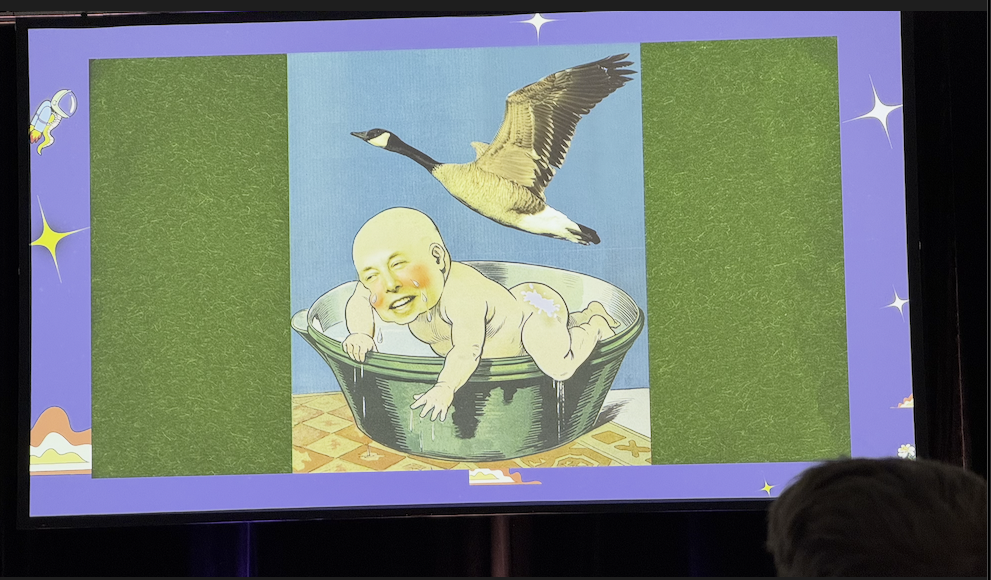

I was shocked to see keynote speakers openly bashing Elon Musk (whom I have great respect for) and even the sponsors of the event for being monopolies and sometimes anti-consumer. Is this why we call the USA the land of the free? Free as in free speech?

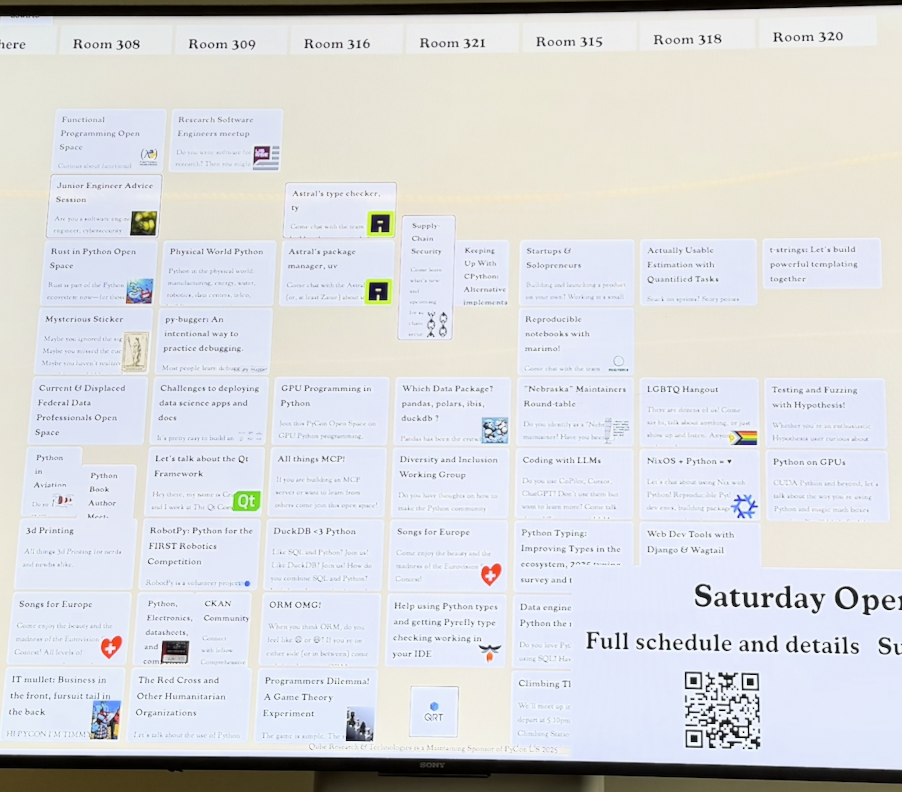

One of the gems of PyCon is the hallway talks, as I think they are called. The topics range from tech to “how to write a book” to “what is consciousness?”. They are a great way to get to know and listen to other participants as well.

Alright. See you at PyCon 2026 🙂