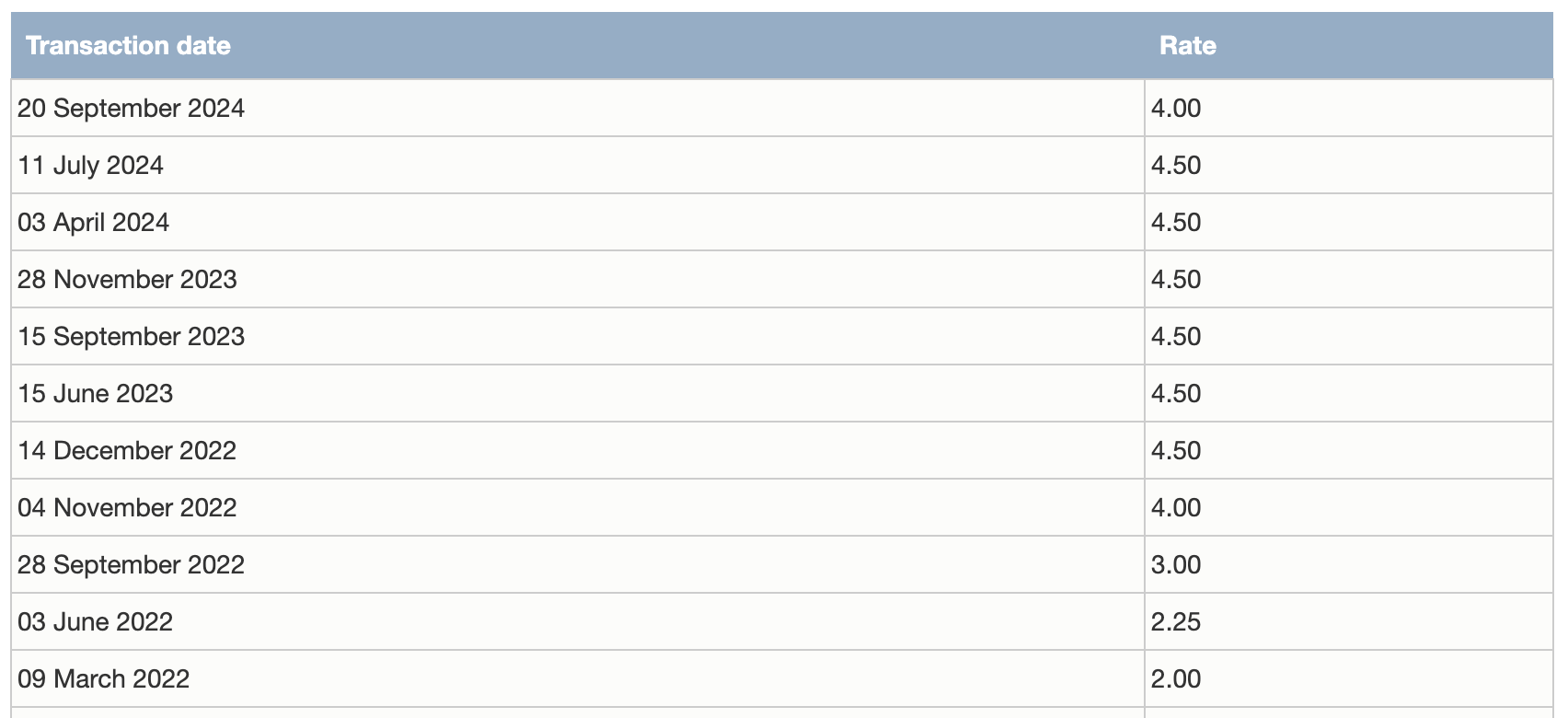

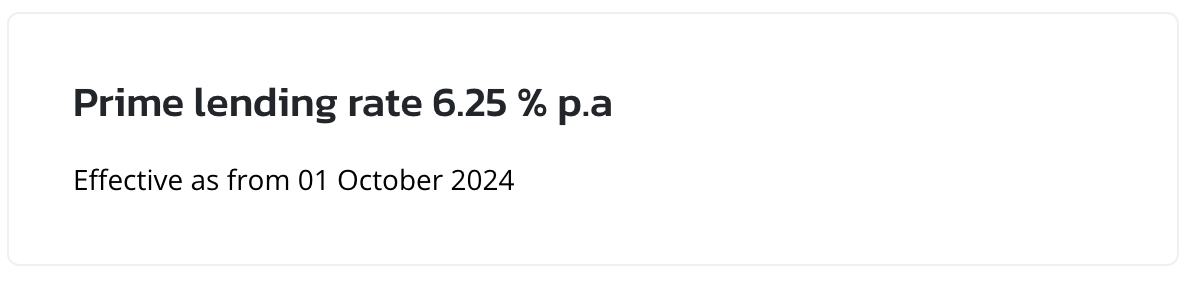

As of September 2024, the new rates are in effect.

Before you start chanting for your favourite politician, let’s see if it’s a good thing.

If you have lots of money in the bank?

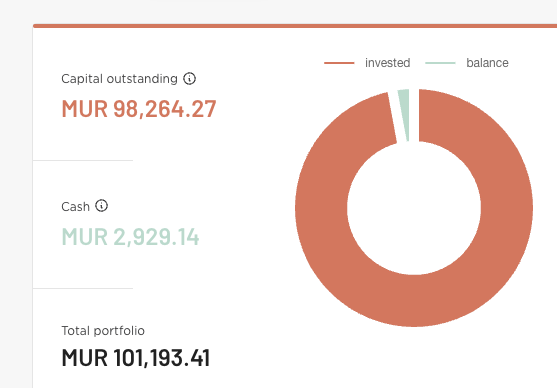

Bad news for you. You will receive less interest per year on your savings account. You will now receive Rs 26,000 instead of Rs 31,000 on Rs 1 million in MCB. So your money isn’t going to grow as fast in the bank.
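To make the arithmetic concrete, here is a minimal sketch of the yearly interest on a savings balance. The rates 3.1% and 2.6% are not quoted in this post; they are simply what Rs 31,000 and Rs 26,000 on Rs 1 million imply. The function name is made up for illustration, and the sketch ignores compounding and tax.

```python
def annual_interest(balance: float, rate_percent: float) -> float:
    """Simple interest earned over one year (no compounding, no tax)."""
    return balance * rate_percent / 100


balance = 1_000_000  # Rs 1 million in a savings account

# ASSUMPTION: rates implied by the Rs 31,000 and Rs 26,000 figures above.
old_rate = 3.1  # % per year, before the cut
new_rate = 2.6  # % per year, after the cut

old_interest = annual_interest(balance, old_rate)
new_interest = annual_interest(balance, new_rate)

print(f"Before the cut: Rs {old_interest:,.0f} per year")
print(f"After the cut:  Rs {new_interest:,.0f} per year")
print(f"Difference:     Rs {old_interest - new_interest:,.0f} per year")
```

So every Rs 1 million sitting in a savings account earns roughly Rs 5,000 less per year.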

If you have a current home loan?

Good news for you, mate, if you have a floating-rate loan. You will be paying less in interest charges now, as the PLR (Prime Lending Rate) has also decreased.

So yeah. You may chant.

For new home buyers?

If the interest rate decreases, you’ll be able to get a higher loan amount to purchase your dream house, right? RIGHT? Sorry, but nope.

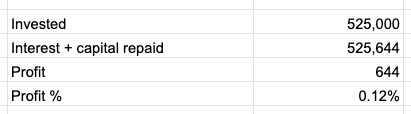

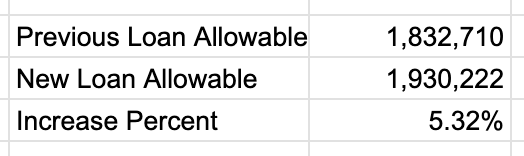

Let’s consider a person earning a salary of Rs 30,000 per month. The bank would give that person a maximum loan of Rs 1,832,710 if the interest rate is 6.75% p.a. The person can therefore offer a house owner Rs 1.8 million for the purchase.

When the interest rate decreases by 0.5 percentage points, that person becomes eligible for a loan of Rs 1,930,222.
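For the curious, here is a rough sketch of how a maximum loan can be estimated from a salary, using the standard annuity formula for a fixed monthly repayment. The bank's actual method is not given here, so the repayment share (40% of salary) and the term (30 years) are assumptions of mine. The results will therefore be close to, but not the same as, the figures above.

```python
def max_loan(monthly_payment: float, annual_rate_percent: float, years: int) -> float:
    """Largest loan a fixed monthly repayment can pay off (annuity formula)."""
    r = annual_rate_percent / 100 / 12  # monthly interest rate
    n = years * 12                      # number of monthly repayments
    return monthly_payment * (1 - (1 + r) ** -n) / r


salary = 30_000         # Rs per month, from the example above
repayment_share = 0.40  # ASSUMPTION: share of salary allowed for repayments
term_years = 30         # ASSUMPTION: loan term in years

payment = salary * repayment_share

loan_before = max_loan(payment, 6.75, term_years)  # rate before the cut
loan_after = max_loan(payment, 6.25, term_years)   # rate after the 0.5 point cut
increase = (loan_after / loan_before - 1) * 100

print(f"Max loan at 6.75%: Rs {loan_before:,.0f}")
print(f"Max loan at 6.25%: Rs {loan_after:,.0f}")
print(f"Increase: {increase:.1f}%")
```

Whatever the exact parameters, the pattern is the same: same salary, same repayment, but a bigger loan once the rate drops. The two figures quoted above work out to a rise of about 5.3%.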

But here’s the catch. If you have more money to spend without having done anything, your colleagues also have more money to bid on the same house. In the end, you’ll end up paying Rs 1.9 million for the house. You didn’t make any “progress”.

Conclusion

Money is losing value.